On a sunny Wednesday morning this March, teachers at Percy Julian Middle School in Oak Park, Ill., slowly filtered into a before-school, all-staff meeting on artificial intelligence.

As teachers fired up their laptops and sipped coffee, the presenters at the front of the school’s cafeteria warned that AI was set to reshape the education space, whether educators were ready or not.

“We need to get in front of this,” one speaker said. “You don’t ever want to start a race behind the line. You want to be able to be ahead.”

It’s not an unusual message for teachers to hear these days. Nationally, experts say it’s important for educators to learn about AI so they can leverage the technology in their work and show students how to use it responsibly. Half of all teachers said they’ve had at least one training on AI, according to a 2025 survey from the EdWeek Research Center.

But Wednesday’s presentation did feature some unusual messengers. The speakers at the staff meeting weren’t school leaders or outside experts. They were nine 8th graders, who have spent the past year experimenting with the technology under the supervision of Ashley Kannan, a social studies teacher at the school.

“We’re here because AI is going to be a part of our future, our generation,” said Viv Rowell, 14, to the more than 60 assembled educators and parents of some of the presenting students. “We’re going to be the first kids who have AI grading our college resumes. It’s just going to be a giant part of our lives.”

Students have perhaps the biggest stake in how AI literacy is taught, but often the least input into those decisions, noted Kannan. Teachers decide what constitutes appropriate use, or even whether to broach the subject at all in class.

“We’re diagnosing something, and we’re not asking the patient,” he said, in an interview.

With the project, Kannan wanted to flip the script. What would students do if they could use AI to reach their own goals? What guardrails would they deem essential?

The result was a group of teenagers who came away with their own nuanced assessments of the technology—and some advice for the adults in their lives.

“I’d rather have my students making those decisions right now than the Pentagon,” Kannan said.

How students went from AI skeptics to frequent users

Kannan has been a teacher for almost 30 years. “I was around when we started with overheads and transparencies,” he said.

He’s seen how schools have hurried to catch up as new technologies have transformed how people seek out information, read, and write—first the internet, then smartphones. Even so, he was resistant to AI in the classroom at first.

“A year ago, I was convinced that I was going to ride out the whole AI thing, and not even bother with it,” Kannan said.

That changed when he became part of a cohort of educators examining how innovation is reshaping education with Teach Plus, a teacher-leadership organization. So much of the AI conversation in his building, Kannan said, was focused on cheating. He wanted to move past that with students to explore “promise and possibility.”

The district had involved students in decision-making processes around technology before, said Michael Arensdorff, the school system’s chief technology officer. They’ve convened tech advisory groups over the past 10 years for input into instructional and infrastructure decisions, he said.

“Once kids are there, it completely changes the conversation,” Arensdorff said. Parents and educators might come with strong opinions, he said, but having students in the room refocuses the conversation around kids’ needs.

Kannan’s project, though, was more in-depth than other student voice initiatives the district had taken on in the past.

Students would have to go through acceptable use training with district tech leadership, and then have Google’s Gemini added to their school-issued devices for the year. They would give up their lunches and recesses twice a week to work on assignments Kannan designed, and then eventually develop their own projects. And they’d be expected to do extra homework.

When Kannan approached the students in October, some were skeptical at first, they said in interviews.

He said he wanted to talk to them about something related to AI, and immediately, a few 8th graders thought he was accusing them of using it to cheat. “We were terrified,” said Kennedy Lewis, 14, laughing.

“We got really worried, and we were still skeptical, even when we joined the group, because AI is always seen as bad, bad, bad,” said Viv.

Though surveys show that almost half of middle schoolers use AI for homework, they’re also wary of how the technology might be affecting them. Forty-eight percent of middle school students said they were concerned about AI harming their critical thinking skills in a 2025 survey from the RAND Corp. In another survey, almost a third of Gen Z say they’re angry about AI growth, and about 4 in 10 say they’re anxious about the trajectory the technology is taking.

Kennedy said a lot of her classmates share that worry. “Now that I’ve been in this cohort, it’s changed my perspective,” she said.

Technology can’t replace human judgment, students say

Kannan’s first assignment was a history lesson: Ask the chatbot to assume the persona of a figure from Reconstruction, and interview them about their life and experiences.

It gave students an opportunity to get comfortable with the platform and practice prompting, he said. The project’s second phase was more open-ended. Kannan asked students: If you had access to this tool in your everyday life, what would you do with it?

One student worked with Gemini to craft a 30-day roadmap that would help her get better at test-taking. Another built a plan to scale a business idea. (Other projects underscored how students’ fluency with AI could create new challenges for schools to manage—one boy, for instance, coded an app to control his home computer from his school Chromebook.)

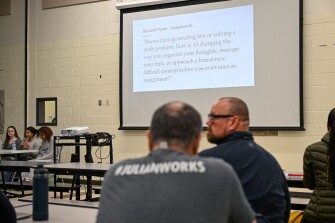

In their presentation to the teachers, the students returned often to questions of ethics.

“The transformation here isn’t just about smarter technology, it’s about creating systems and mindsets that respect boundaries,” said Lailah Kamal, 14.

That’s the responsibility of AI developers, but also users, students said.

“You can’t really start a conversation without having your own thought,” said Ava Kamenski, 13, talking about using AI as a writing or thinking partner. “But once you do, then you can work together with AI to create something that’s still yours.”

AI can also help students explore a topic from more angles, said Rae Kamenski, 13. “It’s like, ‘Hey, let’s look at a few different possibilities to start this assignment,’ and it can push you to think harder,” she said.

This is a core question about acceptable use of AI in school classrooms: Does asking AI to brainstorm with you or build on your thoughts help students think more deeply? Or does it short-circuit the thinking process? Not all educators and experts come to the same conclusion.

While some researchers warn that involving AI in the drafting stages of writing an essay, for instance, could shortchange learning, some teachers encourage students to use AI as a thought partner.

It was clear, the student presenters said, that not all teachers in the audience shared their views. One student said she saw one of her teachers in the crowd with a skeptical frown, seemingly “thinking of rebuttals.”

But ultimately, the students said, AI still can’t replace human judgment—especially that of their teachers.

“Your teachers will actually laugh at your jokes, and make you feel important, and make you feel loved,” said Josie Baker, 14. “I don’t think AI could ever take that away.”